Three criteria.

One defensible answer.

AIIRS evaluates tasks across three independent dimensions.

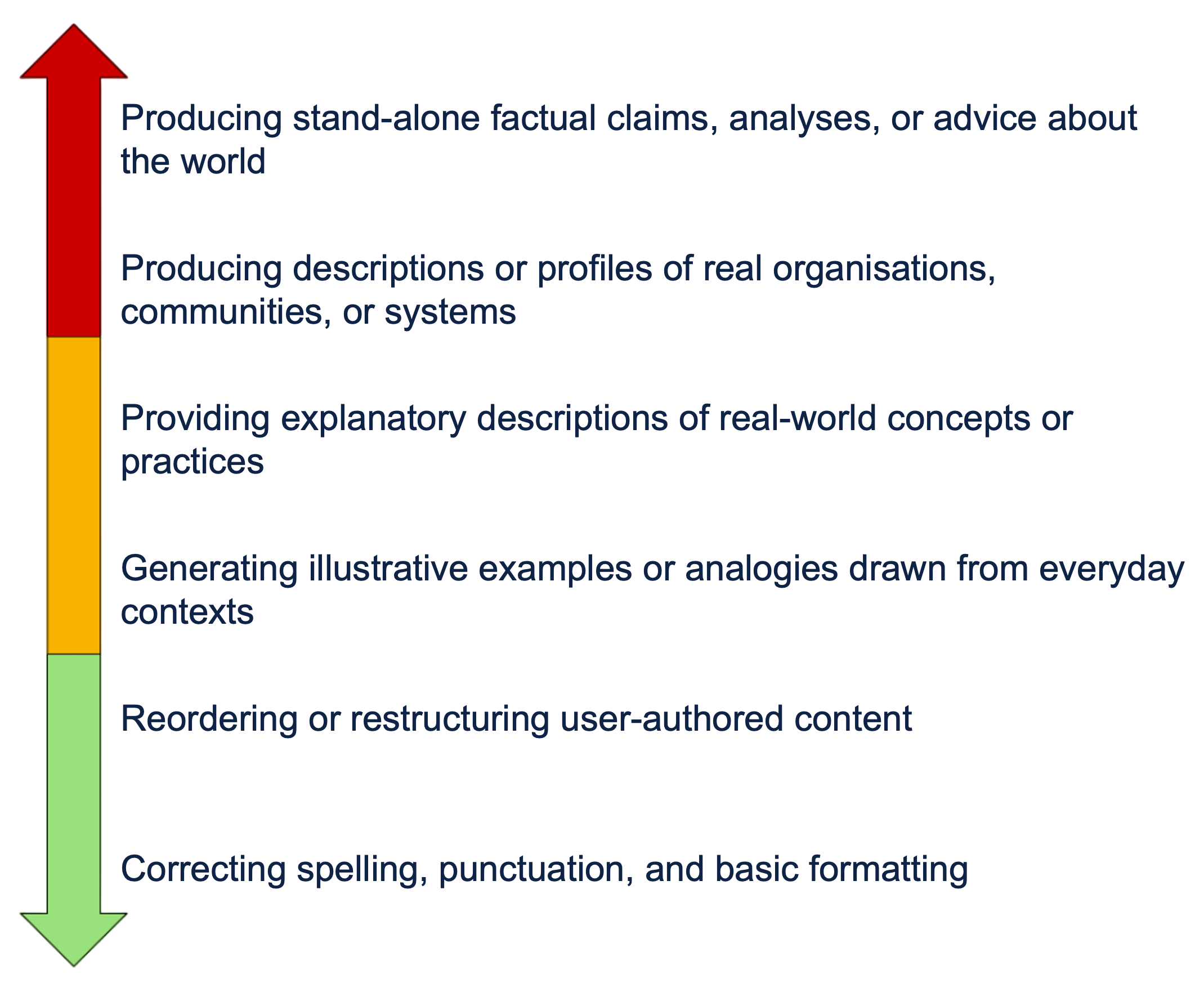

Epistemic Dependence

Epistemic dependence captures whether a task requires the system's representations of the world to be correct in order for the task outcome to be usable.

Tasks with lower epistemic dependence rely only on user-provided material: the task outcome remains usable even if the system's representations of the world are incorrect.

Tasks with higher epistemic dependence require the system's representations of the world to be correct for the task outcome to be usable.

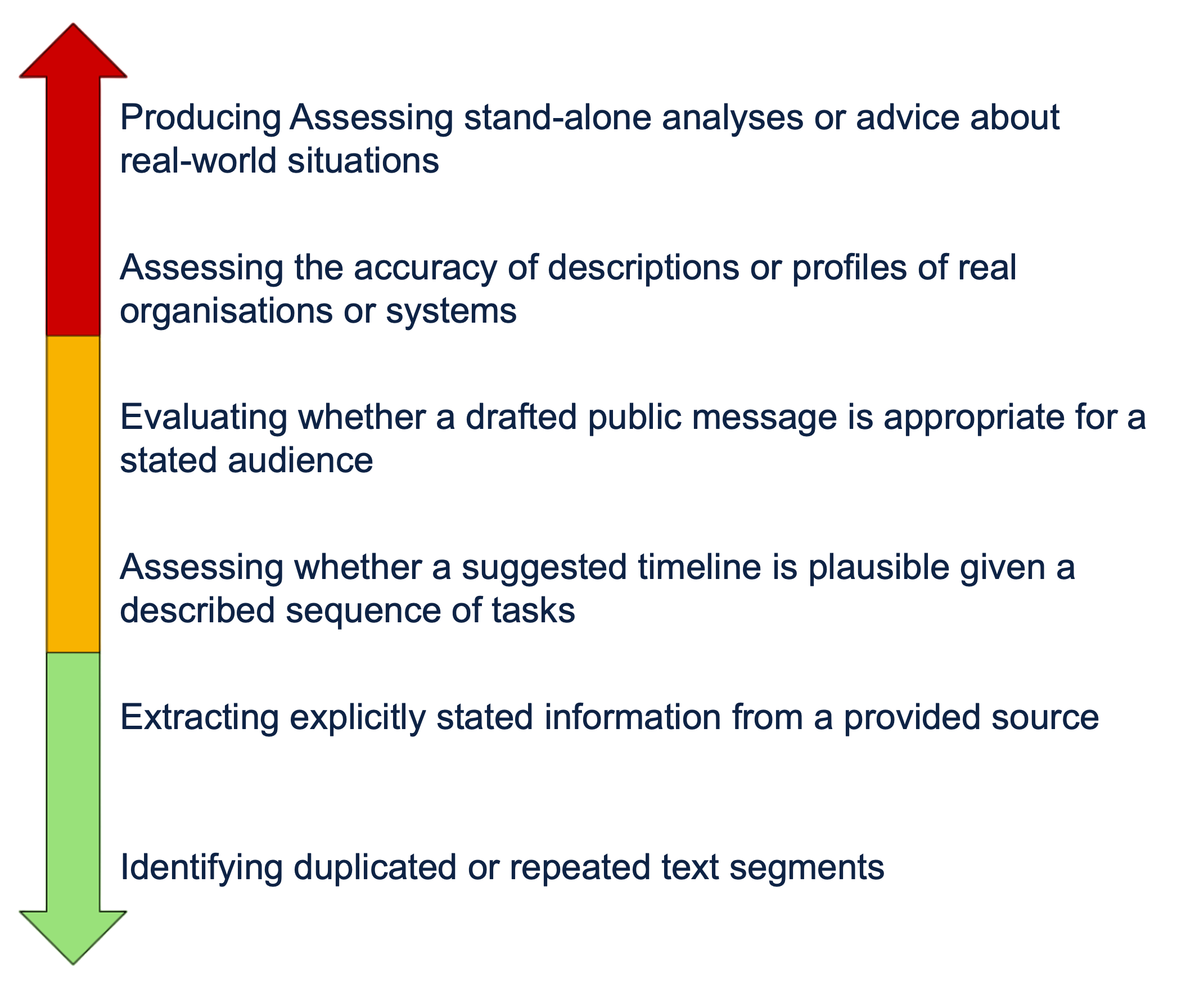

Verifiability

Verifiability captures the basis on which the correctness of a GenAI system's output can be verified for the task.

Verifiability is assessed independently of consequences. A task may be high risk due to unsourced verifiability, even where the immediate consequences of error are limited.

Tasks with embedded verifiability enable quick, reliable verification by the user or the surrounding process, without requiring specific domain expertise.

Tasks requiring expert verifiability depend on specialised expertise or external investigation that requires evaluative judgement.

Consequences of Error

The consequences of error reflect the extent to which incorrect, misleading, or incomplete GenAI outputs affect decisions, records, or outcomes related to the task.

Tasks with minimal consequences of error are those in which errors have little effect on understanding or outputs and do not affect decisions, records, or outcomes relating to people beyond the task.

Tasks with significant consequences of error are those in which errors affect decisions about people, alter records relating to them, or compromise outputs that have consequences for individuals or groups beyond the task.
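
As a rough illustration of how the three dimensions sit side by side, the sketch below records one rating per dimension for a task. It is not part of AIIRS: the class names, the `TaskProfile` record, and the example task are hypothetical. The level names are only those mentioned in the text above, and the framework may define further levels or an ordering that this sketch does not attempt to capture. No combined rating is computed, because the text does not specify one.

```python
from dataclasses import dataclass
from enum import Enum


class EpistemicDependence(Enum):
    LOWER = "lower"    # relies only on user-provided material
    HIGHER = "higher"  # usable only if the system's world representations are correct


class Verifiability(Enum):
    EMBEDDED = "embedded"    # quick, reliable checks without domain expertise
    EXPERT = "expert"        # needs specialised expertise or external investigation
    UNSOURCED = "unsourced"  # named in the text above but not defined there


class ConsequencesOfError(Enum):
    MINIMAL = "minimal"          # errors do not reach people beyond the task
    SIGNIFICANT = "significant"  # errors affect decisions or records about people


@dataclass(frozen=True)
class TaskProfile:
    """Hypothetical record: one rating per dimension, each set independently."""
    task: str
    epistemic_dependence: EpistemicDependence
    verifiability: Verifiability
    consequences_of_error: ConsequencesOfError


# Illustrative only: the task and its ratings are made up for this example.
profile = TaskProfile(
    task="example task",
    epistemic_dependence=EpistemicDependence.HIGHER,
    verifiability=Verifiability.UNSOURCED,
    consequences_of_error=ConsequencesOfError.MINIMAL,
)

print(profile)
```

Keeping each dimension as its own field mirrors the point that verifiability is assessed independently of consequences: the example profile pairs unsourced verifiability with minimal consequences of error, and neither value constrains the other.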